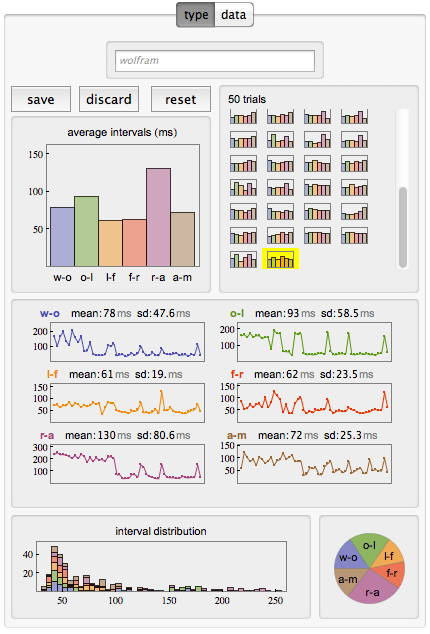

A few weeks ago, Paul-Jean Letourneau posted an article on the Wolfram Blog about using Mathematica to collect and analyze keystroke metrics as a way to identify individuals. The article analyzes how you type. While you repeatedly type the word “wolfram” into a small interactive widget, it measures the time interval between each pair of consecutive keystrokes, then collects and visualizes the data.
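The raw measurement is simple: record a timestamp for each key press and take successive differences. The Python sketch below assumes one millisecond timestamp per character of a single typed word. The function name `transition_intervals` and the sample timestamps are hypothetical and do not come from the original widget.

```python
from typing import List, Sequence, Tuple


def transition_intervals(word: str, timestamps_ms: Sequence[float]) -> List[Tuple[str, float]]:
    """Return (pair, interval) for each consecutive character pair in `word`.

    Assumes `timestamps_ms[i]` is the time (in ms) at which `word[i]` was
    pressed; each interval is the difference between successive presses.
    A list (not a dict) keeps repeated pairs in other words apart.
    """
    if len(word) != len(timestamps_ms):
        raise ValueError("need exactly one timestamp per character")
    return [
        (f"{word[i - 1]}-{word[i]}", timestamps_ms[i] - timestamps_ms[i - 1])
        for i in range(1, len(word))
    ]


# Hypothetical timestamps for one typing of "wolfram" (7 keys -> 6 intervals).
sample = transition_intervals("wolfram", [0, 70, 135, 200, 265, 395, 460])
print(sample)
# [('w-o', 70), ('o-l', 65), ('l-f', 65), ('f-r', 65), ('r-a', 130), ('a-m', 65)]
```

A seven-letter word yields six intervals per trial, which is where the six-dimensional points discussed later come from.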

It is somewhat interesting at this point to analyze one’s own typing style. For example, there appears to be a bimodal distribution of the time intervals between keystrokes: the sequence “r-a” takes me almost twice as long (~130ms) as most other sequences (~60-70ms). There is also a ‘learning’ effect visible in my 50 trials, where my speed improves noticeably after about 20 repetitions. However, there are occasional relapses into a much slower typing pattern throughout the rest of the trials.
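Both observations can be checked with a few lines of summary statistics. This is a minimal sketch, assuming each trial is stored as a list of six intervals in the order of the hypothetical `PAIRS` list; the `split=20` default simply mirrors the "about 20 repetitions" noted above and is an arbitrary cutoff, not something the widget computes.

```python
from statistics import mean
from typing import List, Sequence, Tuple

# The six transitions of "wolfram", in typing order (assumed storage order).
PAIRS = ["w-o", "o-l", "l-f", "f-r", "r-a", "a-m"]


def per_pair_means(trials: Sequence[Sequence[float]]) -> List[float]:
    """Average interval for each transition, taken across all trials."""
    return [mean(column) for column in zip(*trials)]


def slowest_pair(trials: Sequence[Sequence[float]]) -> str:
    """Name of the transition with the largest average interval."""
    means = per_pair_means(trials)
    return PAIRS[means.index(max(means))]


def learning_effect(trials: Sequence[Sequence[float]], split: int = 20) -> Tuple[float, float]:
    """Mean total time per word before and after trial number `split`."""
    totals = [sum(trial) for trial in trials]
    return mean(totals[:split]), mean(totals[split:])
```

A slow outlier such as “r-a” shows up as the maximum of `per_pair_means`, and a drop in the second value returned by `learning_effect` would reflect the speed-up over repetitions.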

What I found more interesting, though, is the subsequent analysis the author did across a set of 42 such series he obtained from his colleagues (noting humorously that “it just so happens that Wolfram is a company full of data nerds”). He then analyzes and visualizes that data in various ways.

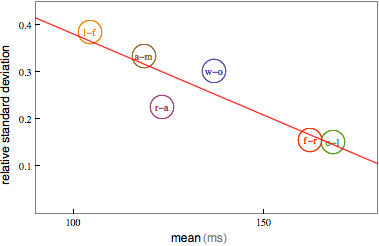

He observes the bimodal nature of the distribution, with peaks around 75ms and 150ms for different pairs of characters. Averaging over all of those pair typing times, he also finds a correlation indicating that people who type more slowly are more consistent.
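One way to look for such a speed/consistency relationship is to reduce each typist to a mean interval (speed) and a standard deviation (spread), then correlate the two across people. The sketch below assumes that choice of measures; the original post may define consistency differently. The function names and the nested-list layout of `people` are hypothetical.

```python
from math import sqrt
from statistics import mean, pstdev
from typing import Sequence, Tuple


def speed_and_spread(trials: Sequence[Sequence[float]]) -> Tuple[float, float]:
    """Mean and population standard deviation of all intervals one person typed."""
    values = [x for trial in trials for x in trial]
    return mean(values), pstdev(values)


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    norm = sqrt(sum((x - mx) ** 2 for x in xs) * sum((y - my) ** 2 for y in ys))
    return cov / norm


def speed_consistency_correlation(people: Sequence[Sequence[Sequence[float]]]) -> float:
    """Correlate mean interval with spread across typists (one list of trials each)."""
    stats = [speed_and_spread(trials) for trials in people]
    return pearson([m for m, _ in stats], [s for _, s in stats])
```

Under these assumptions, a negative coefficient would match the reported trend: a larger mean interval (slower typing) going together with a smaller spread (more consistent typing).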

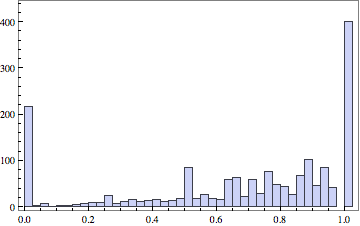

The analysis continues with the observation that each measurement can be seen as a point in a six-dimensional space (six pair transitions in a seven-character word). When a person types the same word 50 times, you get a cluster of 50 points in six-dimensional space, and different individuals will produce different clusters. So one can use the built-in function FindClusters to determine such clusters. However, since people type somewhat inconsistently, one person’s typing will sometimes show up in another person’s cluster and vice versa. There are various ways to measure how well the clusters distinguish individuals; the author implements the Rand index, a measure of the similarity between two data clusterings. This gives a numeric accuracy on a scale from 0 to 1 for the ability to distinguish between a pair of people. Among 42 people there are 21*41=861 different pairs, but the author chose to look at all 42*42=1764 ordered pairs, because the FindClusters results depend on the order of the input data, so Rand[i,j] may differ from Rand[j,i]. Looking across all of these pairs, you get the following histogram of Rand quality scores:
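For reference, the standard Rand index compares two labelings of the same points by counting the point pairs on which they agree. The sketch below implements that textbook definition. It is not the author’s Mathematica code, whose details may differ, and it does not reproduce the FindClusters step. The trial labels are hypothetical.

```python
from itertools import combinations
from math import comb
from typing import Hashable, Sequence


def rand_index(labels_a: Sequence[Hashable], labels_b: Sequence[Hashable]) -> float:
    """Fraction of point pairs on which two clusterings agree.

    A pair of points "agrees" if both clusterings put the two points in
    the same cluster, or both put them in different clusters. Only the
    grouping matters, not the cluster names.
    """
    n = len(labels_a)
    if n != len(labels_b) or n < 2:
        raise ValueError("need two labelings of the same >= 2 points")
    agree = sum(
        (labels_a[i] == labels_a[j]) == (labels_b[i] == labels_b[j])
        for i, j in combinations(range(n), 2)
    )
    return agree / comb(n, 2)


# True typist for each of four hypothetical trials vs. two clustering outcomes.
truth = ["A", "A", "B", "B"]
print(rand_index(truth, [0, 0, 1, 1]))  # 1.0: clusters match the typists exactly
print(rand_index(truth, [0, 1, 0, 1]))  # 0.333...: only 2 of 6 pairs agree

# Unordered vs. ordered pairs of 42 people, as counted in the text.
print(comb(42, 2), 42 * 42)  # 861 1764
```

Because the index is symmetric in its two arguments, any asymmetry between Rand[i,j] and Rand[j,i] has to come from the clustering step (the order-dependent FindClusters output), not from the score itself.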

This clearly shows that keystroke metrics for a single word are not sufficient to reliably distinguish between arbitrary pairs of people: the average quality score is only 0.67. On the other hand, about 400 (~23%) of those quality scores are a perfect 1.0, so for about a quarter of the pairs this metric alone would suffice to reliably tell the two typists apart. About half as many scores are 0.0, meaning that the clusters overlap so much that no distinction is possible. The remaining scores are distributed mostly between 0.5 and 1.0, meaning you would guess right more often than wrong.
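The figures in this paragraph are simple summaries of the 1764 scores. The sketch below computes the same kinds of summaries from a list of scores; the band boundaries mirror the paragraph above, and the function name is hypothetical. The actual scores would come from the author’s shared data, which is not reproduced here.

```python
from statistics import mean
from typing import Dict, Sequence


def summarize_scores(scores: Sequence[float]) -> Dict[str, float]:
    """Mean score plus the share of scores in each band discussed above."""
    n = len(scores)
    return {
        "mean": mean(scores),
        "perfect": sum(s == 1.0 for s in scores) / n,
        "zero": sum(s == 0.0 for s in scores) / n,
        "half_to_one": sum(0.5 <= s < 1.0 for s in scores) / n,
    }


# Sanity check of the share quoted above: 400 perfect scores out of 1764 pairs.
print(round(400 / 1764, 3))  # 0.227
```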

The author wraps up the post with this paragraph:

> Using this fun little typing interface, I feel like I actually learned something about the way my colleagues and I type. The time to type two letters with the same finger on the same hand takes twice as long as with different fingers. The faster you type, the more your typing speed will fluctuate. The more your typing speed fluctuates, the harder it will be to distinguish you from another person based on your typing style. Of course we’ve really just scratched the surface of what’s possible and what would actually be necessary in order to build a keystroke-based authentication system. But we’ve uncovered some trends in typing behavior that would help in building such a system.

An interactive CDF widget embedded in the article lets you collect and visualize the timing of your own typing. The source code and the test data are also shared if you want to explore the details of this interesting analysis further.