On the Strategic Project and Portfolio Management Blog by Simon Moore one can find many fascinating stories about project failures as well as a related collection of project management case studies. One entry there links to a project management method that NASA has mandated internally since 2009 to estimate the cost and schedule of its various aerospace projects. The method is called Joint Confidence Level – Probabilistic Calculator (JCL-PC). It’s a sophisticated method that uses historical data and insight into estimation psychology (like optimism bias) to arrive at corrective multipliers for project estimates, based on the project’s completion percentage and the required confidence level. It also uses Monte Carlo simulations to determine outcomes, leading to scatterplots of the simulated project runs on a Cost-vs.-Schedule plane. From there one can determine, at a confidence level of for example 70%, what the cost and schedule overruns will likely be.

If you’re either already familiar with the method or very good at abstract thinking, the above paragraph will have meant something to you. If it didn’t, bear with me. In this post I make a brief attempt to explain what I understood about the method using the data visualizations from two sources (a 100+ page report and a 12-page FAQ). The report is fascinating on many levels, as it deals with the history of high-profile project overruns (Apollo program, Space Shuttle, Space Station) and the pervasive culture of under-estimation (optimism bias) that comes from not accounting for project risks that are unknown, but historically evident.

JCL starts with historical observations of cost and schedule overruns in similar projects. For example, the above-cited report contains best-fit histogram distributions for robotic missions.

The idea is to use a set of such distributions for probabilistic estimates of cost and schedule. The set of distributions needs to account for the fact that in the early stages of a project there are more unknowns and as such higher risk of overruns. From the report:

The JCL-PC estimating method is based on the hypotheses that in the beginning phases of a project there are many unknown risks – and over time the project will have a high probability of exceeding estimated costs and scheduled duration. … Work as it was initially planned will inevitably change. Quantifiable risks become clearer and NASA’s S-Curves will tend to lay down as the work goes forward. Keep in mind that it’s not the project that is becoming inherently riskier. It’s a matter of participants fully identifying the real work that was “out there” all along. Even though the scope of the work wasn’t fully perceived “back when” – progress has continued to identify the risks and quantify the corrective actions. History is written in real time and that history differs to a greater or lesser degree from what was anticipated. The JCL-PC helps us better plan for and manage that difference.

The JCL-PC method strikes a needed balance between subjectivity and anticipated risk variability leaving only one remaining probability influence factor to deal with – namely, assigning the percentage complete of the subject project. This % complete factor includes both subjective and objective elements.

One of the key elements is the notion of a multiplier, which implements this reduction of uncertainty over time as well as a so-called optimism corrector and other project risk, in line with historical aerospace project overruns. The multiplier is plotted below as a function of the project % complete parameter for different confidence levels:

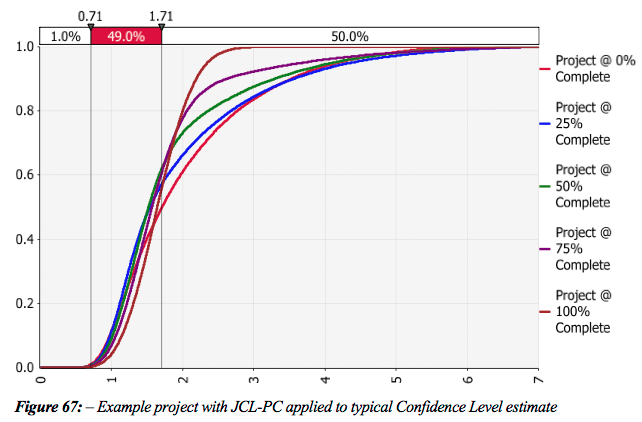

The concept is illustrated via two charts of a fictitious $1m project (applied here to cost overruns, but equally applicable to schedule overruns). The first shows a point estimate and its S-Curves (confidence bands) per project % complete.

The second shows the S-Curves after applying “the optimism corrector and some minor project risk, through a more typical project life cycle with project scope creep … As the project evolves the S-Curve moves slightly to the right and becomes more and more vertical.”

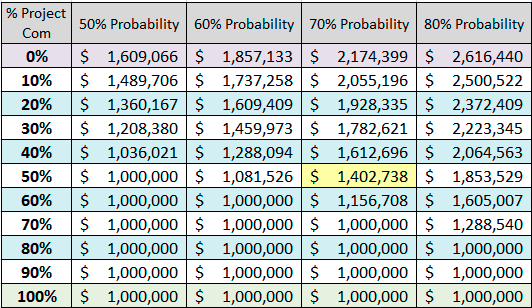
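To make the S-Curve idea a bit more tangible, here is a minimal sketch of how I picture it. It is not the JCL-PC calculation itself: the lognormal shape, the `sigma` values and the median multipliers are assumptions I picked purely for illustration, and the function names are my own. The S-Curve is simply the sorted list of simulated final costs, and a confidence level is read off as a percentile of that list.

```python
import math
import random

def simulate_costs(point_estimate, median_multiplier, sigma, runs=20_000, seed=1):
    """Simulate final costs with a lognormal overrun factor (an assumed shape)."""
    rng = random.Random(seed)
    mu = math.log(median_multiplier)
    return sorted(point_estimate * rng.lognormvariate(mu, sigma) for _ in range(runs))

def cost_at_confidence(sorted_costs, confidence):
    """Read the S-Curve: the cost that a `confidence` share of runs stays under."""
    index = min(len(sorted_costs) - 1, int(confidence * len(sorted_costs)))
    return sorted_costs[index]

# Hypothetical parameters: a wide spread early in the project, and a narrower
# spread with a slightly higher median (scope creep) near the end.
early = simulate_costs(1_000_000, median_multiplier=1.0, sigma=0.5)
late = simulate_costs(1_000_000, median_multiplier=1.1, sigma=0.1)

for label, costs in (("early", early), ("late", late)):
    p50, p70, p80 = (cost_at_confidence(costs, c) for c in (0.5, 0.7, 0.8))
    print(f"{label}: 50% ${p50:,.0f}  70% ${p70:,.0f}  80% ${p80:,.0f}")
```

With these made-up inputs the gap between the 50% and 80% estimates is much smaller for the late-stage run, which is the numerical counterpart of the curve becoming more vertical.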

It would be great to have an interactive graphic where the S-Curves are plotted in response to sliding the project % complete between 0% and 100%. The report lists the above multipliers in a numerical table spanning project % complete (in 1% increments) and four confidence levels (50%, 60%, 70%, 80%). Rather than copying the entire table I filtered this down to just 10% increments in project % complete. This table tells NASA officials how much money they will have to spend for a $1m project at various confidence levels, as a function of project % complete:

The data point highlighted in yellow is described as follows:

When the project is 50% complete, you’ll notice that a 50% confidence level suggests that the project can be completed for the anticipated $1,000,000. However, if we adhere to the NASA standard of a 70% confidence level, we see that another $400,000+ will likely be needed to complete the project. No matter how well a project is managed, it rarely compensates for ultra-optimistic budget estimates that sooner or later return with a vengeance and overcome the most skillful leaders.
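The arithmetic behind that example is just a multiplication of the point estimate by the multiplier for the chosen confidence level. A small sketch, using only the two values that can be inferred from the quote above: the 1.4 is my rounding of "another $400,000+", so treat it as an approximate lower bound rather than the report's actual table entry. The dictionary and function names are hypothetical.

```python
# Approximate multipliers at 50% project completion, inferred from the quoted
# example only. Keys are confidence levels in percent. The 1.4 is a rounded
# lower bound ("another $400,000+"), not a value copied from the report's table.
MULTIPLIERS_AT_50_PCT_COMPLETE = {50: 1.0, 70: 1.4}

def required_budget(point_estimate, confidence_pct):
    """Point estimate scaled by the multiplier for the given confidence level."""
    return point_estimate * MULTIPLIERS_AT_50_PCT_COMPLETE[confidence_pct]

def extra_reserve(point_estimate, confidence_pct):
    """Money needed on top of the point estimate at that confidence level."""
    return required_budget(point_estimate, confidence_pct) - point_estimate

for pct in (50, 70):
    budget = required_budget(1_000_000, pct)
    reserve = extra_reserve(1_000_000, pct)
    print(f"{pct}% confidence: budget ${budget:,.0f}, reserve ${reserve:,.0f}")
```

Other combinations of % complete and confidence level would need the corresponding entries from the report's table, which I have not reproduced here.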

As a final illustration the FAQ document includes this scatterplot as JCL-PC output:

A Frontier Curve represents all possible combinations of cost and schedule that will give you a percent JCL. The plot shows the Frontier Curve for a 70% JCL in yellow. The green dots are simulated runs with outcomes below the selected cost and schedule (blue cross-hair, yellow labels). White dots have either cost or schedule overruns, red dots have both.
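The colour coding and the Frontier Curve can be sketched in a few lines. This is my own reading of the plot, not NASA's tooling: the simulated runs below come from a made-up model in which a shared risk factor drives both cost and schedule, and all numbers and function names are assumptions for illustration. The joint confidence level of a (cost, schedule) target is the share of green dots, and one point on the frontier is the smallest cost that still reaches 70% for a given schedule.

```python
import math
import random

def simulate_runs(n=5000, seed=7):
    """Hypothetical runs as (cost in $M, duration in months) pairs."""
    rng = random.Random(seed)
    runs = []
    for _ in range(n):
        shared = rng.gauss(0, 0.15)  # common risk pushing cost and schedule together
        cost = 1.0 * math.exp(shared + rng.gauss(0.10, 0.10))
        months = 36 * math.exp(shared + rng.gauss(0.05, 0.10))
        runs.append((cost, months))
    return runs

def classify(run, cost_target, schedule_target):
    """Green: within both targets. White: one overrun. Red: both overrun."""
    cost, months = run
    over_cost = cost > cost_target
    over_schedule = months > schedule_target
    if over_cost and over_schedule:
        return "red"
    if over_cost or over_schedule:
        return "white"
    return "green"

def joint_confidence(runs, cost_target, schedule_target):
    """Share of runs that meet both the cost and the schedule target."""
    green = sum(1 for r in runs if classify(r, cost_target, schedule_target) == "green")
    return green / len(runs)

def frontier_cost(runs, schedule_target, jcl=0.70):
    """Smallest simulated cost reaching `jcl` for this schedule, or None if unreachable."""
    in_time_costs = sorted(cost for cost, months in runs if months <= schedule_target)
    needed = math.ceil(jcl * len(runs))
    if len(in_time_costs) < needed:
        return None
    return in_time_costs[needed - 1]

runs = simulate_runs()
print(f"JCL at $1.3M / 42 months: {joint_confidence(runs, 1.3, 42):.0%}")
for months in (40, 44, 48, 60):
    cost = frontier_cost(runs, months)
    print(months, "months ->", "unreachable" if cost is None else f"${cost:.2f}M")
```

Sweeping the schedule target and collecting the resulting costs traces out one Frontier Curve. A tighter schedule demands a larger cost target to keep the same joint confidence, and below some schedule the level cannot be reached at any cost.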

The report makes bold claims about the potential of JCL-PC, but also about the challenges inherent in attempting to change an entire management culture. I am not qualified to comment on these claims, but my impression is that such probabilistic project management methods will raise the bar in the field and should lead to more accurate estimates.

The more I think about such abstract concepts, the more I’m convinced that mental models are inherently visual. We remember some key visualizations or charts and anchor our understanding of the concept around those visual images. We also use them to communicate or teach the concepts to each other – hence the value of the whiteboard or even the napkin drawing. As such, the increasing computational ability to produce such visual images and ideally even interactive graphics is an important element of academic and scientific endeavors.